The Illusion of the Infinite Scroll

In 2021, whistleblower Frances Haugen leaked tens of thousands of pages of internal documents, the basis for the reporting known as The Facebook Files. These papers revealed that Meta, then still called Facebook, knew Instagram was “toxic” for teen girls, specifically regarding body image. The company’s “heat maps” weren’t just about clicks; they were about “time spent” and the psychology of keeping users scrolling.

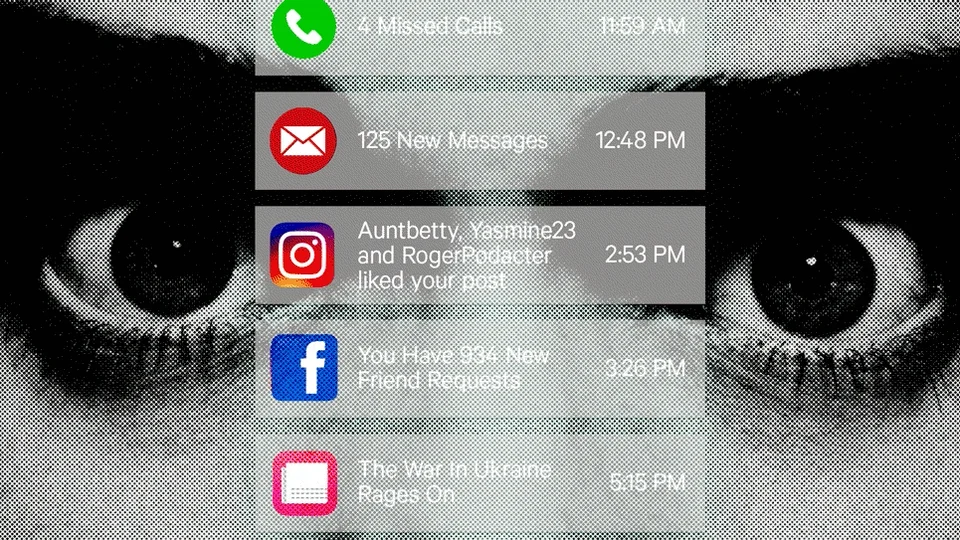

You aren’t browsing preferences. You are navigating a system built on “variable ratio reinforcement,” a concept B.F. Skinner refined in the 1950s. This is the digital version of the Vegas Strip. Casinos remove clocks to erase time. Algorithms remove the “stopping cue,” the natural end of a page, to erase your agency.

This isn’t new. In 1998, Amazon’s “Collaborative Filtering” began predicting what you wanted. By 2006, Netflix’s Cinematch shifted from recommending movies to optimizing for “retention.” The goal evolved from utility to addiction.

The attention economy prioritizes arousal over wellbeing. Haugen’s documents showed that, starting in 2017, Facebook’s ranking algorithm weighted emoji reactions, including “angry,” five times as heavily as “likes.” Anger drives engagement. TikTok’s “For You” page runs on a similar loop, which researchers at the University of Southern California identified as a primary driver of “doomscrolling” in 2022.
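To make the effect of that weighting concrete, here is a minimal sketch of an engagement score in which an “angry” reaction counts five times as much as a “like.” Only the five-to-one ratio comes from the reporting described above; the function names, the post data, and the idea that ranking is a simple weighted sum are assumptions made for illustration, not a description of Facebook’s actual code.

```python
# Hypothetical weights: only the 5:1 angry-to-like ratio comes from the text.
REACTION_WEIGHTS = {"like": 1.0, "angry": 5.0}


def engagement_score(reactions):
    """Sum reaction counts scaled by their weights; unknown reactions count as 0."""
    return sum(
        REACTION_WEIGHTS.get(kind, 0.0) * count
        for kind, count in reactions.items()
    )


def rank_posts(posts):
    """Return post ids ordered from highest to lowest engagement score."""
    return sorted(
        posts,
        key=lambda post_id: engagement_score(posts[post_id]),
        reverse=True,
    )


# Invented example data: a calm post with many likes, an outrage post with few.
posts = {
    "calm_update": {"like": 40, "angry": 0},
    "outrage_bait": {"like": 5, "angry": 10},
}

for post_id in rank_posts(posts):
    print(post_id, engagement_score(posts[post_id]))
```

In this toy feed, the post with 15 total reactions outranks the post with 40, purely because of how the reactions are weighted.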

The machinery is precise.

Why Your Preferences Are Actually Predictions

Amazon deployed its first large-scale collaborative filtering system in 1998. It mapped millions of users to suggest books. This shifted the goal from serving a user to predicting their next impulse.

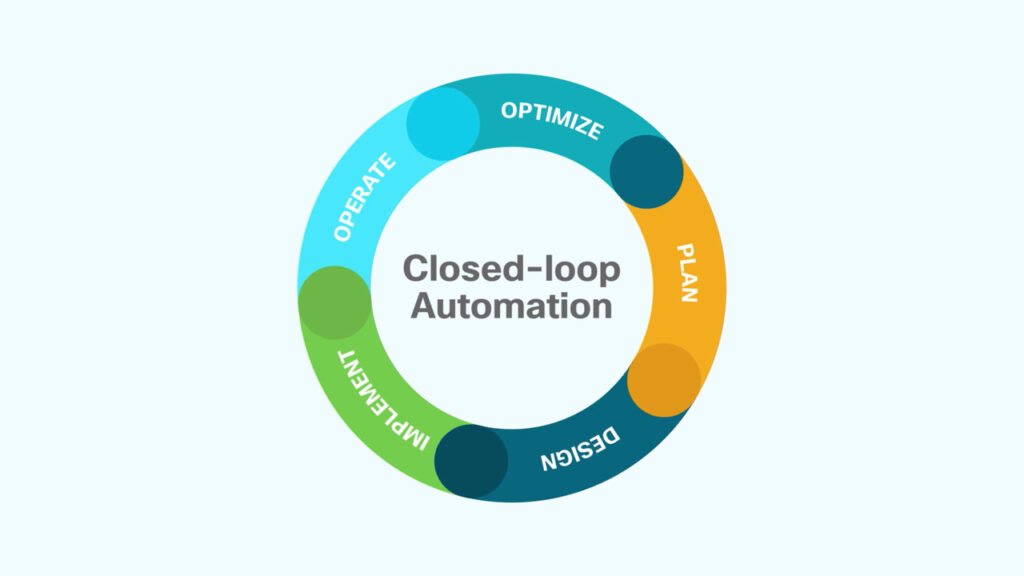

The system uses stochastic gradient descent to minimize the error between a predicted rating and an actual click. This mathematical optimization doesn’t just guess; it narrows the range of possible inputs. By rewarding high-probability engagement, the algorithm creates a feedback loop. This is the digital Panopticon. Jeremy Bentham described this prison in Panopticon; or, The Inspection-House (1791). The prisoner self-regulates because the guard’s gaze is invisible but constant. In the digital version, the “gaze” is the optimization function. You don’t change your behavior because a guard is watching, but because the algorithm removes the options you didn’t choose.
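The optimization step described above can be sketched in a few lines. This is a toy matrix-factorization model trained with stochastic gradient descent on squared error, under stated assumptions: the click data is invented, the hyperparameters (`k`, `lr`, `reg`, `epochs`) are arbitrary, and nothing here is claimed to match any platform’s production system.

```python
import random


def predict(P, Q, u, i):
    """Predicted click score: dot product of user and item latent factors."""
    return sum(pu * qi for pu, qi in zip(P[u], Q[i]))


def mean_squared_error(P, Q, interactions):
    """Average squared gap between predicted score and observed click."""
    total = sum((c - predict(P, Q, u, i)) ** 2 for u, i, c in interactions)
    return total / len(interactions)


def train(interactions, n_users, n_items, k=2, lr=0.05, reg=0.01,
          epochs=200, seed=0):
    """Fit latent factors with SGD; epochs=0 returns the random initial factors."""
    rng = random.Random(seed)
    P = [[rng.uniform(-0.1, 0.1) for _ in range(k)] for _ in range(n_users)]
    Q = [[rng.uniform(-0.1, 0.1) for _ in range(k)] for _ in range(n_items)]
    data = list(interactions)
    for _ in range(epochs):
        rng.shuffle(data)
        for u, i, clicked in data:
            err = clicked - predict(P, Q, u, i)
            for f in range(k):
                pu, qi = P[u][f], Q[i][f]
                # Gradient step on squared error with L2 regularization.
                P[u][f] += lr * (err * qi - reg * pu)
                Q[i][f] += lr * (err * pu - reg * qi)
    return P, Q


# Invented data: users 0-1 click items 0-1, users 2-3 click items 2-3.
interactions = [
    (u, i, 1.0 if (u < 2) == (i < 2) else 0.0)
    for u in range(4)
    for i in range(4)
]

P0, Q0 = train(interactions, 4, 4, epochs=0)
P, Q = train(interactions, 4, 4)
print("error before training:", mean_squared_error(P0, Q0, interactions))
print("error after training:", mean_squared_error(P, Q, interactions))
```

Once trained, the model scores the items a user already clicks on higher than the ones they skip, which is the narrowing the paragraph describes: what gets shown next is whatever the model already expects to succeed.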

The system stops reflecting who you are and starts dictating who you will become by narrowing the horizon of what you see.

By 2012, Facebook’s EdgeRank algorithm prioritized high-engagement content over chronological order. This turned the feed into a predictive engine. Much of this processing occurs at the Meta data center in Prineville, Oregon, where thousands of servers execute the weights of these predictive models. You aren’t managing a feed. You are following a path designed to prevent you from leaving.

The Dopamine Loop and Social Media Algorithm Manipulation Psychology

Amazon launched its first recommendation engine in 1998. It mapped book preferences. Today, that logic has morphed into a psychological trap. TikTok differs from early algorithms. It employs a “variable reward schedule” with millisecond precision. While Facebook relied on social validation, TikTok’s For You page optimizes for the “flow state.”

The brain releases dopamine during anticipation, not the reward. In The Molecule of More, Daniel Z. Lieberman explains that dopamine drives the pursuit of the “new.” TikTok engineers weaponized this. Unlike the linear feeds of 2010, TikTok uses a high-frequency feedback loop. It analyzes “watch time” and “re-watch rate.” This delivers a hit of novelty every 15 to 60 seconds.

The attention economy treats human focus as a raw material to be mined, refining curiosity into a predictable loop of engagement.

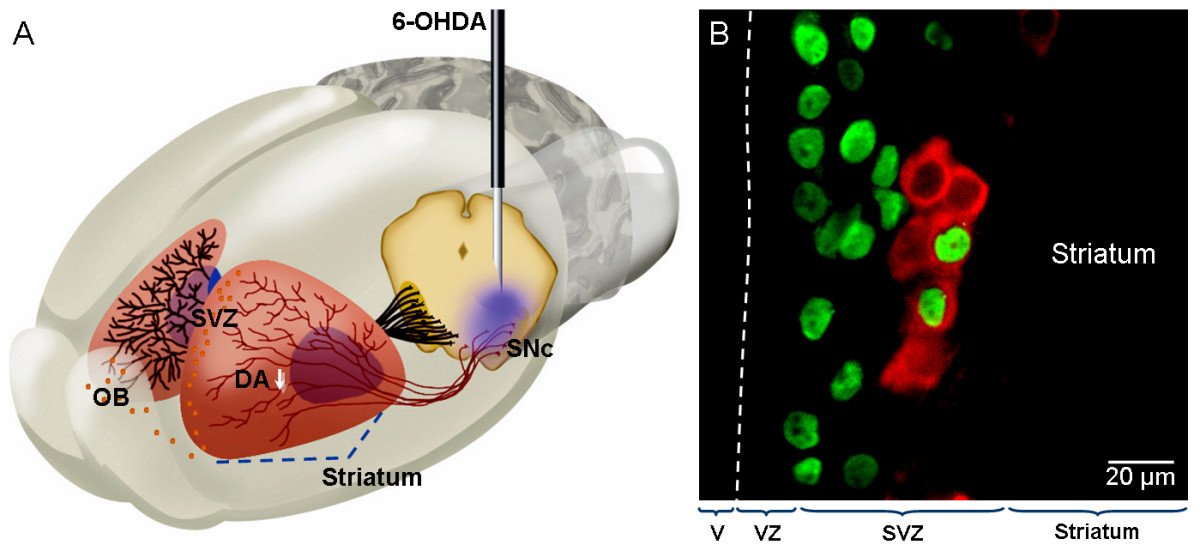

This engineering bypasses the prefrontal cortex. A 2019 study in Nature Communications found that short-form video consumption triggers immediate reward responses in the ventral striatum. This mirrors B.F. Skinner’s 1953 experiments on “intermittent reinforcement.” The swipe is the lever. The result is stark: a 2023 study in The Lancet linked heavy social media use to a 33% increase in depressive symptoms among adolescents.

Q: How does TikTok’s algorithm differ from early recommendation engines?

A: TikTok’s algorithm focuses on passive consumption rather than active preference. 1998-era systems suggested items based on past purchases. TikTok uses real-time behavioral signals like watch duration. This creates a variable reward schedule: it triggers dopamine during the anticipation of the next video and effectively automates the user’s attention.

Q: What is a variable reward schedule in social media?

A: A variable reward schedule is a psychological mechanism where a reward is delivered at unpredictable intervals.

Variable Rewards: The Casino in Your Pocket

B.F. Skinner placed a pigeon in a box in 1948. In Schedules of Reinforcement (1957), written with Charles Ferster, he documented what happened when birds received food at unpredictable intervals: they developed a compulsive obsession. The bird pecked frantically for the next hit, regardless of hunger.

Engineers at Meta and ByteDance replaced pellets with notifications. This is a variable ratio schedule. The dopamine spike occurs in the ventral striatum, the brain’s reward center. The spike happens during the anticipation of the reward, not the receipt of it.

The brain ignores predictable rewards. It craves the uncertainty of a potential win.

By 2012, Instagram decoupled the act of posting from the delivery of likes. Former Google design ethicist Tristan Harris revealed that these platforms use “intermittent reinforcement.” This maximizes time-on-device. The system doesn’t deliver likes instantly; it batches them. The algorithm holds rewards back and releases them in bursts. This mimics the “near-miss” effect of a slot machine. This outperforms steady rewards. It prevents “reward satiety” and keeps the user in a state of perpetual craving. The pull-to-refresh gesture is the digital lever. It triggers a chemical rush that overrides the prefrontal cortex’s impulse control.
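The unpredictability at the heart of this mechanism is easy to simulate. The sketch below models each pull-to-refresh as an independent chance of a reward, a random-ratio schedule, which is one simple form of variable ratio reinforcement. The function names, the mean ratio of five pulls per reward, and the fixed seed are all assumptions for illustration; no platform’s actual notification logic is represented.

```python
import random


def variable_ratio_rewards(n_pulls, mean_ratio, seed=0):
    """Simulate n_pulls refreshes; each pays out with probability 1/mean_ratio."""
    rng = random.Random(seed)
    return [rng.random() < 1.0 / mean_ratio for _ in range(n_pulls)]


def gaps_between_rewards(outcomes):
    """Count how many pulls were needed to reach each successive reward."""
    gaps, since_last = [], 0
    for rewarded in outcomes:
        since_last += 1
        if rewarded:
            gaps.append(since_last)
            since_last = 0
    return gaps


outcomes = variable_ratio_rewards(n_pulls=1000, mean_ratio=5)
gaps = gaps_between_rewards(outcomes)
print("rewards delivered:", sum(outcomes))
print("pulls needed for the first ten rewards:", gaps[:10])
```

The average payout rate is fixed, but the number of pulls before any single reward varies widely. That variation is what separates this schedule from a predictable one, where a reward arrives after every fifth pull.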

Engineering the Echo: How Algorithms Narrow the Human Experience

Amazon deployed the first large-scale collaborative filtering system in 1998. It mapped user preferences to suggest books. Today, TikTok evolves this into a precision tool. The system tracks millisecond-level dwell time. In a 2020 internal study, ByteDance revealed their algorithm identifies subconscious preferences. These preferences appear before a user consciously recognizes them.

This is not just a tool; it is a psychological mirror. The system reflects existing biases. This creates a filter bubble where opposing views vanish. This mirrors the 17th-century salon culture of Paris. Figures like Madame de Sablé curated guests. They ensured their specific philosophies remained unchallenged. They insulated their intellects from the friction of dissent.

The attention economy transforms human curiosity into a predictable stream of data for profit.

The human experience narrows. Meta’s internal research, leaked in 2021 via the Facebook Files, showed that the 2018 algorithm change amplified “angry” reactions by weighting them more heavily than “likes.” This prioritized outrage over nuance. Users stop encountering perspectives and instead see caricatures. This isolation is the product. As these loops tighten, cognitive friction disappears.

The internet now browses the user. The algorithm decides what is true by deciding what is seen. This fundamentally shifts the boundaries of perceived reality.

The Invisible Hand of Social Media Algorithm Manipulation Psychology

Amazon deployed its first collaborative filtering recommendation engine in 1998. It was a tool for efficiency. Then came 2006, when Facebook launched the “News Feed.” While the feed was initially chronological, the internal shift toward “EdgeRank” began shortly after. By 2007, the goal shifted. The priority was no longer organizing information, but maximizing “time spent.”

Engineers in Menlo Park applied “variable reward schedules,” a mechanism rooted in B.F. Skinner’s 1938 work, The Behavior of Organisms. This is the engagement lever. The “pull-to-refresh” gesture mimics a slot machine. Users seek a dopamine hit, mirroring the pigeons in Skinner’s box who pecked a disc for pellets delivered at random intervals.

Once the user is hooked, a second lever activates: content curation.

“The filter bubble is a personal ecosystem of information,” Eli Pariser wrote in his 2011 book, The Filter Bubble.

This is where engagement meets cognitive bias. By 2016, these systems didn’t just keep users online; they curated political realities. This leverages confirmation bias. When an algorithm suppresses contradictory evidence, the brain stops seeking alternatives. Users feel they are choosing, but a line of code in California has already pre-selected the options. The choice is an echo.

From User to Product: The Economics of Attention Extraction

Amazon launched its first large-scale recommendation engine in 1998. This shifted the internet from a static directory into a predictive tool. That shift created a blueprint. If you can predict what a user wants to buy, you can control their behavior. By the 2010s, this “predictive shopping” logic evolved into behavioral extraction. The goal moved from selling a product to selling the user’s future actions.

Meta and ByteDance don’t sell software; they sell certainty. Meta relies on a “social graph.” This tracks who you know and what you like. ByteDance uses a content graph. The TikTok algorithm analyzes “micro-signals,” such as the exact millisecond a user swipes away. It does this to map subconscious triggers. This is a technical leap from Meta’s interest-based targeting to real-time neurological mirroring.

The cost is documented. In 2021, whistleblower Frances Haugen leaked the Facebook Files. These revealed that Meta knew Instagram’s architecture harmed teenage girls’ body image. Internal documents showed that engagement metrics prioritized “meaningful social interaction.” In practice, this meant amplifying outrage. High-arousal emotions, specifically anger, keep users stationary.

The goal is no longer to connect people, but to keep them receptive to targeted ads.

This isn’t an accident. It is the refined economics of extraction.

The Cognitive Cost of Outsourcing Curiosity

Amazon deployed its first large-scale collaborative filtering system in 1998. It did more than suggest books. It began outsourcing human curiosity to a machine. By the time Netflix scaled its “Cinematch” algorithm in 2002, the shift was complete. Users stopped searching for the unknown. They accepted predicted results.

This is the engine of algorithmic manipulation. When a system decides your feed, it narrows your cognitive horizon. You are no longer browsing a library. You are walking a pre-defined path. This mirrors the curated exclusivity of the salons of the rue Saint-Honoré in 18th-century Paris. In these spaces, hosts like Madame Geoffrin filtered guests to ensure social harmony. Challenging ideas were excised to protect the status quo.

Digital filters do the same. They create a feedback loop that suppresses the brain’s dopaminergic response to novelty. According to the Journal of Neuroscience, the ventral striatum triggers curiosity when we encounter “prediction errors.” Algorithms remove these errors. They replace discovery with a loop of the expected.

The shift from active discovery to passive consumption transforms the user from an explorer into a recipient of a calculated stream.

This kills serendipity. In 2014, researchers like Zeynep Tufekci analyzed how algorithmic filtering limits exposure to divergent views. When the machine optimizes for “engagement,” it removes the friction required for critical thinking. Users trade intellectual autonomy for a smooth feed. They ignore that growth happens only when they encounter something that challenges them.

Who Is Actually Making Your Decisions?

Efficiency costs us our autonomy. We believe we choose our content. However, we are actually reacting to “choice architecture.” This isn’t vague influence. It is a calculated narrowing of the world until one option feels like the only logical choice.

This architecture scaled in 1998 when Amazon launched its item-to-item collaborative filtering. It transformed a bookstore into a laboratory for behavioral economics. The system leverages what B.F. Skinner detailed in Science and Human Behavior (1953): variable-ratio reinforcement. The algorithm delivers rewards at unpredictable intervals. This triggers dopamine releases in the nucleus accumbens. It mimics the neurological hook of a slot machine.
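Item-to-item collaborative filtering can be illustrated with a small sketch. The version below scores two items as similar when the same customers bought both, using cosine similarity over sets of buyers. The customers, titles, and function names are invented, and this is a simplified illustration of the general technique, not Amazon’s implementation.

```python
from math import sqrt


def item_similarity(purchases):
    """Cosine similarity between items, based on which users bought both."""
    buyers = {}
    for user, items in purchases.items():
        for item in items:
            buyers.setdefault(item, set()).add(user)
    sims = {}
    for a in buyers:
        for b in buyers:
            if a != b:
                shared = len(buyers[a] & buyers[b])
                if shared:
                    sims[(a, b)] = shared / sqrt(len(buyers[a]) * len(buyers[b]))
    return sims


def recommend(item, sims, top_n=3):
    """Items most similar to `item`, highest similarity first (ties by name)."""
    scored = [(b, s) for (a, b), s in sims.items() if a == item]
    scored.sort(key=lambda pair: (-pair[1], pair[0]))
    return [b for b, _ in scored[:top_n]]


# Invented purchase histories.
purchases = {
    "ana": {"dune", "neuromancer"},
    "ben": {"dune", "neuromancer", "cookbook"},
    "cal": {"dune", "foundation"},
    "dee": {"cookbook"},
}

sims = item_similarity(purchases)
print(recommend("dune", sims))
```

The system never needs to know why two books go together. It only needs to observe that the same people bought both, which is why the approach scales so easily from books to any other behavior that can be logged.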

Modern systems, like TikTok’s “For You” page, use deep reinforcement learning to optimize for “dwell time.” This metric doesn’t measure satisfaction. It measures biological capture. Design ethicist Tristan Harris argues this creates a “race to the bottom of the brain stem.” It bypasses the prefrontal cortex to trigger primal impulses. The “self” is no longer the driver when the machine predicts your next desire. We have become data points optimized for engagement. We are losing the cognitive capacity to desire what the code has not already suggested.

What would change if more people knew this? Share your perspective.

Frequently Asked Questions

Q: What is social media algorithm manipulation psychology?

A: Social media algorithm manipulation psychology is the study of how platforms use behavioral data to influence user decisions and emotions. Tech companies employ “persuasive design” to trigger dopamine releases through variable rewards, such as likes and notifications. By analyzing your digital footprint, these systems predict which content will keep you scrolling. This creates a feedback loop that alters your perception of reality by prioritizing high-arousal emotions over factual accuracy.

Q: Why does social media algorithm manipulation psychology matter for mental health?

A: Understanding social media algorithm manipulation psychology matters because it reveals how digital environments can distort self-esteem and cognitive function. These algorithms often amplify “outrage” or “perfection,” leading users to compare their internal struggles with a curated, artificial reality. Over time, this constant exposure to skewed data can increase anxiety and polarization. When the system prioritizes engagement over well-being, the user’s psychological autonomy is quietly eroded for the sake of profit.

Q: Is it a misconception that we control our social media feeds?

A: A common misconception regarding social media algorithm manipulation psychology is the belief that users have total control over their feeds. While you may follow specific accounts, the “For You” or “Discovery” pages are governed by black-box models. These models use “collaborative filtering” to push content you didn’t ask for but are psychologically primed to consume. You aren’t choosing the content; you are reacting to a curated stream designed to maximize time-on-site.

Q: How does the history of behavioral psychology relate to today’s algorithms?

A: The roots of these techniques lie in B.F. Skinner’s work on “operant conditioning” in the 1930s. Skinner found that “variable ratio schedules,” in which rewards arrive at unpredictable intervals, create the strongest habit formation. Modern platforms applied this to the “pull-to-refresh” mechanism. By making the reward (a new post or notification) unpredictable, platforms turn a simple app into a digital slot machine that exploits basic human biological drives.

Q: What is a surprising fact about how algorithms manipulate our beliefs?

A: One surprising fact is the “rabbit hole” effect, where systems push users toward increasingly extreme content to maintain engagement. In 2018, researchers found that recommendation engines often suggest more radical versions of a user’s existing interests. This happens because extreme content triggers stronger emotional responses. The algorithm doesn’t have a political agenda; it simply knows that anger and shock keep you clicking longer than nuance does.